7.1.3 PRED_LANDING Model

Purpose

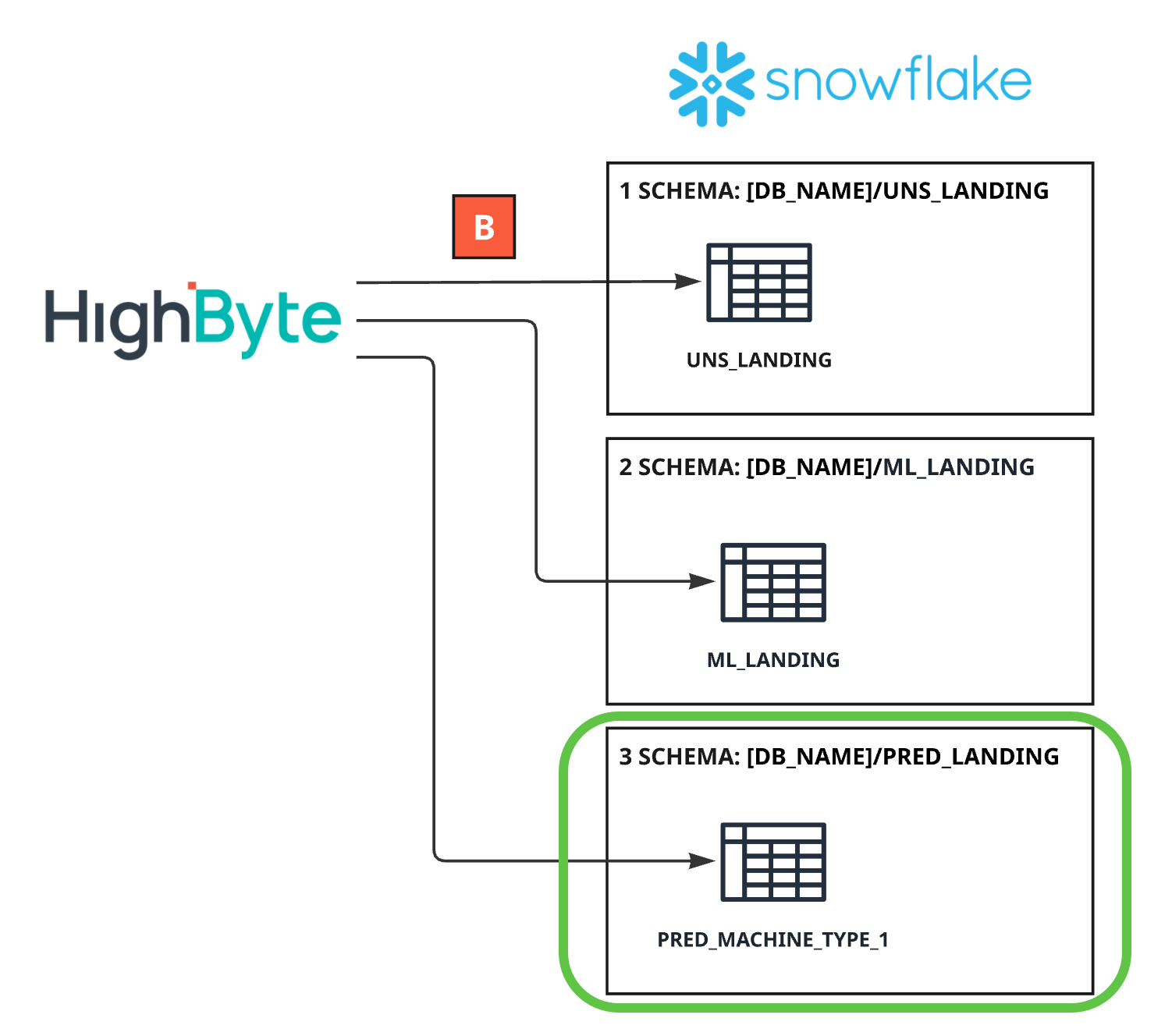

The PRED_LANDING schema is the inference result event layer of the Snowflake serving model.

It stores contextualised, versioned prediction outputs generated by the production inference pipeline (Pipeline J).

This layer enables:

Historical monitoring of model behaviour

Model performance evaluation

Feedback loops for retraining

Auditability of inference decisions

PRED_LANDING mirrors the payload-centric architecture used in UNS_LANDING and ML_LANDING to ensure structural consistency across the ML lifecycle.

Design Intent

The PRED_LANDING model is designed around the following principles:

1. Inference Event Representation

Each record represents one inference event aligned to a specific feature evaluation timestamp. Predictions are stored as structured event payloads rather than as wide tables with rigid columns.

2. Full Lineage & Reproducibility

Each inference record preserves:

MODEL_NAME

MODEL_VERSION

FEATURE_VERSION

This ensures deterministic traceability between:

Feature dataset (ML_LANDING)

Model artifact

Prediction outcome

3. Structural Symmetry

PRED_LANDING follows the same event-based, payload-centric structure as:

UNS_LANDING (contextualised OT events)

ML_LANDING (feature events)

This enables architectural consistency and simplified governance.

4. Separation from Operational Systems

PRED_LANDING is not used for real-time control or SCADA decision logic. Real-time actions occur via MQTT distribution. PRED_LANDING is the historical and analytical persistence layer for inference outcomes.

Data Grain & Structure

Grain

One row per asset per inference evaluation event. Each record corresponds to a single feature evaluation point.
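
The stated grain can be verified with a duplicate check. The query below is an illustrative sketch, not part of the pipeline. It targets the INFERENCE_EVENTS table defined later in this section, and it assumes that MODEL_NAME and MODEL_VERSION belong in the uniqueness key alongside ASSET_PATH and EVENT_TS, so that more than one model may score the same asset at the same evaluation time.

```sql
-- Lists every key that occurs more than once.
-- An empty result means the one-row-per-event grain holds.
-- Assumption: MODEL_NAME and MODEL_VERSION are part of the key.
SELECT
    ASSET_PATH,
    EVENT_TS,
    MODEL_NAME,
    MODEL_VERSION,
    COUNT(*) AS DUPLICATE_ROWS
FROM TMA_APPOMAX_DB.PRED_LANDING.INFERENCE_EVENTS
GROUP BY ASSET_PATH, EVENT_TS, MODEL_NAME, MODEL_VERSION
HAVING COUNT(*) > 1
ORDER BY DUPLICATE_ROWS DESC;
```

If only one model is ever expected per asset and timestamp, the two model columns can be dropped from the GROUP BY to make the check stricter.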

Core Identifiers

EVENT_TS – timestamp representing the feature evaluation time

ASSET_PATH – unique asset identifier

ISA-95 routing context (SITE_ID, AREA_ID, LINE_ID, CELL_ID)

MACHINE_TYPE

MODEL_OBJECTIVE

MODEL_NAME

MODEL_VERSION

FEATURE_VERSION

Payload-Centric Storage Model

PRED_LANDING stores inference outputs in a single structured PAYLOAD column of type VARIANT.

Example conceptual structure:

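    CREATE OR REPLACE TABLE TMA_APPOMAX_DB.PRED_LANDING.INFERENCE_EVENTS (
        EVENT_TS        TIMESTAMP_NTZ(9),  -- feature evaluation time
        SITE_ID         VARCHAR,           -- ISA-95 routing context (site, area, line, cell)
        AREA_ID         VARCHAR,
        LINE_ID         VARCHAR,
        CELL_ID         VARCHAR,
        ASSET_PATH      VARCHAR,           -- unique asset identifier
        MACHINE_TYPE    VARCHAR,
        MODEL_OBJECTIVE VARCHAR,
        MODEL_NAME      VARCHAR,
        MODEL_VERSION   VARCHAR,           -- deployed model artifact
        QUALITY         VARCHAR,
        PAYLOAD         VARIANT            -- structured inference output
    );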

NOTE:

The PAYLOAD column contains structured inference output, such as:

    {
      "prediction": 1,
      "score": 0.87,
      "decision": "ANOMALY",
      "threshold": 0.75,
      "confidence": 0.91,
      "feature_reference": {
        "event_ts": "2026-02-06T10:01:00Z",
        "feature_version": "v3.2"
      }
    }

This structure:

Allows flexible evolution of the output schema

Avoids rigid DDL changes

Supports additional metrics (e.g., SHAP values, probability vectors)

Maintains compatibility across model versions
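
Because the outputs live in a VARIANT column, consumers read individual fields with Snowflake's path notation and an explicit cast. The following is a minimal sketch that assumes the payload keys shown in the example above (prediction, score, decision, threshold, feature_reference); the filter value and row limit are arbitrary choices for illustration.

```sql
-- Project selected payload fields into typed columns.
-- Payload keys are case-sensitive; a key missing from a row yields NULL.
SELECT
    EVENT_TS,
    ASSET_PATH,
    MODEL_NAME,
    MODEL_VERSION,
    PAYLOAD:prediction::INTEGER                        AS PREDICTION,
    PAYLOAD:score::FLOAT                               AS SCORE,
    PAYLOAD:decision::STRING                           AS DECISION,
    PAYLOAD:threshold::FLOAT                           AS THRESHOLD,
    PAYLOAD:feature_reference.feature_version::STRING  AS FEATURE_VERSION
FROM TMA_APPOMAX_DB.PRED_LANDING.INFERENCE_EVENTS
WHERE PAYLOAD:decision::STRING = 'ANOMALY'
ORDER BY EVENT_TS DESC
LIMIT 100;
```

Since a missing key returns NULL rather than raising an error, a query of this shape keeps working when a newer model version adds or omits payload fields.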

Example Data: PRED_LANDING
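
The statement below sketches how a single row could be written, for illustration only. The payload reuses the example values shown earlier in this section. Every other value (site, area, line, cell, asset path, machine type, model objective, model name, model version) is a hypothetical placeholder and is not taken from a real deployment. QUALITY is left NULL because its semantics are not described here.

```sql
-- Illustrative row only: all identifier values are hypothetical placeholders.
-- INSERT ... SELECT is used because Snowflake does not accept PARSE_JSON
-- inside a VALUES clause.
INSERT INTO TMA_APPOMAX_DB.PRED_LANDING.INFERENCE_EVENTS
    (EVENT_TS, SITE_ID, AREA_ID, LINE_ID, CELL_ID, ASSET_PATH,
     MACHINE_TYPE, MODEL_OBJECTIVE, MODEL_NAME, MODEL_VERSION, QUALITY, PAYLOAD)
SELECT
    '2026-02-06 10:01:00'::TIMESTAMP_NTZ,       -- matches feature_reference.event_ts
    'SITE_A', 'AREA_1', 'LINE_1', 'CELL_1',     -- placeholder ISA-95 context
    'SITE_A/AREA_1/LINE_1/CELL_1/ASSET_01',     -- placeholder asset path
    'EXAMPLE_MACHINE',                          -- placeholder machine type
    'ANOMALY_DETECTION',                        -- placeholder model objective
    'example_model',                            -- placeholder model name
    '1.0.0',                                    -- placeholder model version
    NULL,                                       -- QUALITY: semantics not specified
    PARSE_JSON('{
      "prediction": 1,
      "score": 0.87,
      "decision": "ANOMALY",
      "threshold": 0.75,
      "confidence": 0.91,
      "feature_reference": {
        "event_ts": "2026-02-06T10:01:00Z",
        "feature_version": "v3.2"
      }
    }');
```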

Lineage & Traceability

To ensure reproducibility and auditability:

MODEL_VERSION identifies the deployed model artifact

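FEATURE_VERSION identifies the feature logic used (in the example structure above it is carried in the payload as feature_reference.feature_version)

EVENT_TS, together with ASSET_PATH, links back to the corresponding ML_LANDING feature event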

This enables:

Model performance analysis

Post-hoc validation

Root-cause analysis

Retraining workflows
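
The link back to the feature layer can be sketched as a join on asset and evaluation timestamp. This is an assumption-laden illustration: the table name TMA_APPOMAX_DB.ML_LANDING.FEATURE_EVENTS is hypothetical, as is the premise that it exposes ASSET_PATH, EVENT_TS and a PAYLOAD column in the same way INFERENCE_EVENTS does. The model version filter is a placeholder.

```sql
-- Pair each prediction with the feature event it was computed from.
-- Assumptions: ML_LANDING.FEATURE_EVENTS is a hypothetical table name, and it
-- carries ASSET_PATH, EVENT_TS and PAYLOAD columns like INFERENCE_EVENTS.
SELECT
    p.EVENT_TS,
    p.ASSET_PATH,
    p.MODEL_NAME,
    p.MODEL_VERSION,
    p.PAYLOAD:feature_reference.feature_version::STRING AS FEATURE_VERSION,
    p.PAYLOAD:score::FLOAT                              AS SCORE,
    p.PAYLOAD:decision::STRING                          AS DECISION,
    f.PAYLOAD                                           AS FEATURE_PAYLOAD
FROM TMA_APPOMAX_DB.PRED_LANDING.INFERENCE_EVENTS AS p
LEFT JOIN TMA_APPOMAX_DB.ML_LANDING.FEATURE_EVENTS AS f
    ON  f.ASSET_PATH = p.ASSET_PATH
    AND f.EVENT_TS   = p.EVENT_TS
WHERE p.MODEL_VERSION = '1.0.0'   -- placeholder version
ORDER BY p.EVENT_TS;
```

A LEFT JOIN is used so that predictions with no matching feature event still appear, with FEATURE_PAYLOAD set to NULL. Such rows point to a lineage gap worth investigating.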

Usage Pattern

PRED_LANDING is used for:

Model performance monitoring

Drift detection

Retraining dataset assembly

KPI tracking (e.g., precision, recall, defect detection rate)

Enterprise reporting on predictive initiatives

It is not intended for:

Operational HMI consumption

Real-time control logic

Direct MES integration
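
As one possible starting point for the monitoring and drift use cases, the query below aggregates daily score statistics per model version. It is a sketch under assumptions: it relies on the score and decision payload keys from the example payload in this section, treats a shift in mean score or anomaly rate as a coarse drift signal, and uses an arbitrary 30-day window.

```sql
-- Daily inference volume, mean score and anomaly rate per model version.
-- Assumptions: payload keys "score" and "decision" exist as in the example
-- payload; the 30-day lookback is an arbitrary illustration.
SELECT
    DATE_TRUNC('DAY', EVENT_TS)                                AS EVENT_DAY,
    MODEL_NAME,
    MODEL_VERSION,
    COUNT(*)                                                   AS INFERENCE_COUNT,
    AVG(PAYLOAD:score::FLOAT)                                  AS MEAN_SCORE,
    COUNT_IF(PAYLOAD:decision::STRING = 'ANOMALY') / COUNT(*)  AS ANOMALY_RATE
FROM TMA_APPOMAX_DB.PRED_LANDING.INFERENCE_EVENTS
WHERE EVENT_TS >= DATEADD(DAY, -30, CURRENT_TIMESTAMP()::TIMESTAMP_NTZ)
GROUP BY DATE_TRUNC('DAY', EVENT_TS), MODEL_NAME, MODEL_VERSION
ORDER BY EVENT_DAY, MODEL_NAME, MODEL_VERSION;
```

KPIs such as precision and recall additionally require ground-truth labels, which this section does not describe, so they are left out of the sketch.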

Relationship to Other Models

Upstream:

Receives inference-aligned feature events derived from ML_LANDING

Downstream:

Feeds enterprise analytics and ML monitoring workflows

Supports feedback loops for feature and model improvement

PRED_LANDING completes the closed-loop architecture:

Contextualised OT Events → Feature Events → Inference Events → Model Improvement

Summary

The PRED_LANDING model provides versioned, contextualised, payload-centric and reproducible inference event records.

It ensures architectural consistency across the ML lifecycle while maintaining flexibility for evolving model outputs and analytical requirements.